If We Can’t Trust What We Read, What Does That Mean for AI in Schools?

Joan Jacobsen

Many of us feel a quiet fatigue when we’re online.

We click an article and wonder who wrote it.

We read a statistic and pause before believing it.

We scroll past confident statements that may or may not be grounded in fact.

A 2025 Britannica survey put words to that feeling:

More than half of respondents believe the Internet has hurt their attention span.

Nearly 60 percent say they struggle to determine whether what they’re reading is truthful.

A significant number also believe that much of what’s online is simply false. With so much noise, it’s difficult to judge whether an AI tool is classroom-ready.

This isn’t outrage. It isn’t panic. It’s something more subtle. It’s erosion. And that erosion doesn’t stop at the edge of the classroom.

Students and teachers live inside the same information environment as everyone else—fast-moving, crowded, often unclear. Educators don’t resist technology, but they do carry the heavy responsibility of helping students think clearly in a world that can make clarity challenging.

Now AI has entered that ecosystem.

AI can summarize instantly. It can generate explanations in seconds. It can adapt text and create content at extraordinary speed. The potential is real, but so is the underlying question: If we already struggle to trust what we read, what happens when content is generated from dubious information?

A Trustworthy, Teacher-Friendly Experience

This isn’t a call to reject AI. Most educators are curious about it. They see how it could reduce prep time, support differentiation, and make planning more efficient. What they don’t want is more uncertainty. They don’t want to double-check every output. They don’t want invisible sourcing. And they definitely don’t want tools that create more work under the promise of saving time.

During the beta study of Britannica Studio, nearly 300 educators used the new teacher-first AI workspace and provided feedback. One result stood out: user confidence in output accuracy rose to 91 percent after sustained use. Not because the tool was flashy, but because it was transparent and predictable.

One teacher described the materials as “trustworthy and detailed,” especially compared to other AI tools that felt vague. Another educator called the experience “streamlined, reliable, and teacher-friendly.”

That distinction matters.

The broader survey tells us people don’t just want faster access to information. They want confidence in it. They want to know where it comes from. They want less second-guessing.

Classrooms deserve that too.

For more than 250 years, Britannica has operated from a simple premise: knowledge should be verified, accountable, and transparent. That principle becomes only more essential in the age of AI. Britannica Studio was developed with that foundation in mind.

Instead of pulling from the open web, Studio draws from Britannica’s verified, standards-aligned content. Instead of obscuring sourcing, Studio makes it visible. Instead of isolating tasks, Studio supports the full instructional workflow so that teachers can move from content to differentiation to assessment in one connected space.

The goal isn’t more content. It’s fewer doubts.

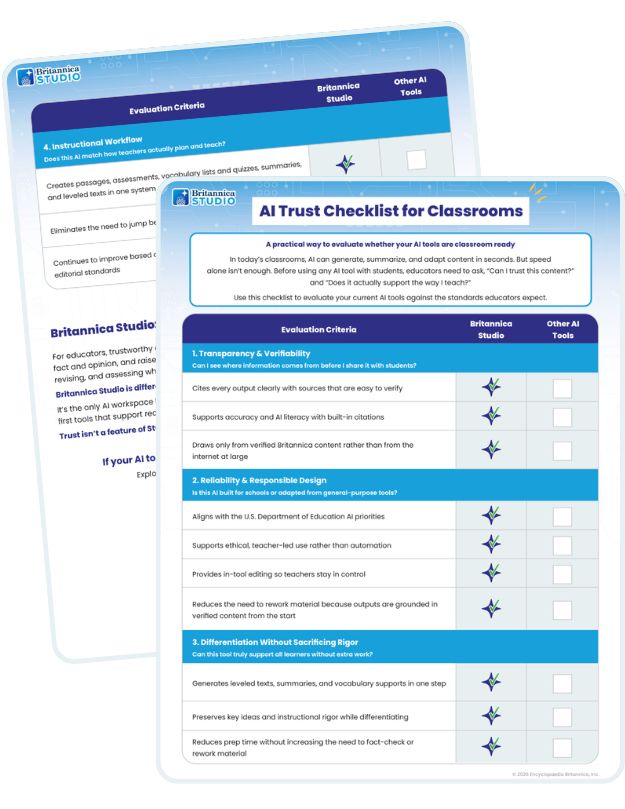

A Simple Way to Ask the Right Questions

As AI becomes more common in classrooms, the most important shift may not be technological, but reflective. Before educators adopt any tool, it helps to pause and ask:

Can I see where the information comes from?

Is this tool designed specifically for schools?

Will the AI support all learners without adding more work?

Does it fit the way teachers actually plan and teach?

These are professional instincts, not technical checkboxes.

To make those conversations easier, we’ve created a simple AI Trust Checklist for Classrooms, a short, practical guide educators can use to evaluate any AI tool against the standards they already expect.

The AI Trust Checklist for Classrooms focuses on transparency, responsible design, differentiation, and instructional workflow—the elements that quietly determine whether AI supports or complicates instruction.

The Internet may feel overwhelming at times, but classrooms remain steadfast. AI can either amplify the ambiguity of the open web or it can be built to counter it.

Trust may feel fragile online, but in schools it must be intentional. As speed increases, clarity must increase with it.

In an era defined by information overload, steadiness isn’t old-fashioned. It’s leadership.

If you’re exploring how AI can support instruction without replicating the Internet’s trust challenges, you can learn more about how Britannica Studio was designed for this moment.

About the Author

Joan Jacobsen

Chief Product Officer

Joan brings more than 25 years of experience developing award-winning products for the classroom. She began her career as a classroom teacher before focusing on educational technology and product development. She has been instrumental in developing many high-quality, engaging solutions that support teachers and drive student success, including CODE and EdTech Breakthrough Award winners.
